About Me

Focusing on journalism, I understand that media literacy is vital to becoming a professional in this field. With the knowledge Arizona State University has provided me, I have begun pursuing opportunities to establish myself as a journalist. I aspire to become a public relations agent, helping clients navigate the media landscape through a journalistic lens. By combining strategic communication skills with journalistic principles, I aim to craft compelling narratives that resonate with diverse audiences.

Throughout my studies, I have developed proficiency across multiple Adobe Creative Suite platforms. I am skilled in Premiere Pro for video editing, Lightroom for photo enhancement, Audition for audio production, and Photoshop for graphic design. These skills enable me to deliver comprehensive multimedia projects for clients, meeting the dynamic demands of modern social media platforms. I am well-equipped to create engaging content that aligns with current digital marketing strategies and best practices.

My Work

During my time at the Cronkite Agency, I have had the opportunity to work with Follett/Sun Devil Campus Stores as my client. In this role, I have been responsible for showcasing their key products through press releases, three of which I have submitted to date.

Black History Month

Sustainability

Women’s History Month

Articles

I’m a journalist covering state and national public affairs for news outlets. Here are some examples of my recent work:

- Cactus Politics

- Texas Politics

- The Floridian Press

- Big Energy News

Media Day in the Life

7:30 a.m.: My mom had already sent me a TikTok of a mom at church making Palm Sunday crosses out of palm leaves. It was genuinely sweet and wholesome, exactly the kind of content moms send on Sunday mornings.

12:00 p.m.: Scrolled past the Druski video on TikTok, the one where he’s doing a mock impression of a conservative white woman in America. The video is clearly satire, but what caught my attention was the conversation surrounding it. There have been growing theories that TikTok’s algorithm is actively suppressing the video and limiting its reach on people’s For You pages, while the same clip is gaining significantly more traction on X.

This was the most interesting piece of potentially questionable content I came across today. The claim circulating is that TikTok is deliberately suppressing Druski’s satirical video, specifically because it mocks conservative viewpoints, while the video spreads freely on X. On the surface this fits into a larger ongoing narrative about TikTok censoring politically adjacent content, which has been a talking point for a while now.

1:30 p.m.: Read a New York Times article about the “No Kings” protests happening across the country. This was straightforward news reporting from a well-established outlet, covering the wave of demonstrations pushing back against what organizers are calling an overreach of executive power.

The New York Times is a well-established, Pulitzer Prize-winning news organization with editorial standards and a clear corrections policy. The article itself cited named sources, included photos from multiple protest locations, and linked to verifiable event information. No credibility concerns here.

3:00 p.m.: Came across a report on KVOA.com, a local Tucson news station, about a teacher and coach being charged with 10 counts of sexual exploitation of a minor. Disturbing story but reported by a legitimate local news source.

KVOA is a legitimate NBC affiliate serving the Tucson, Arizona area. The story about the teacher and coach charged with sexual exploitation of a minor cited court records and law enforcement statements, which are public record. Credible report from a credible local source.

5:00 p.m.: Watched the trailer for the upcoming “The Super Mario Galaxy Movie” on the Nintendo website. It was fun, completely low-stakes media consumption.

Reflection:

Honestly, I was surprised by how little questionable content showed up in my media day compared to what I expected going in. Most of what I consumed was either entertainment, lighthearted lifestyle content, or news from legitimate outlets. The one piece that genuinely gave me pause was the Druski suppression theory. What’s interesting is that it didn’t come from a sketchy website or a random meme. It came through casual conversation in TikTok comment sections and on X, which in some ways makes it harder to fact-check because there’s no single claim to trace back to a source.

That’s actually the pattern I noticed most today: the questionable content wasn’t coming from fake news sites or obvious misinformation accounts. It was showing up in the spaces between content, in comment sections, in captions, in theories shared person to person. That kind of information is way harder to verify and way easier to absorb without thinking critically about it, because it doesn’t feel like “news.” It just feels like people talking.

I also noticed I’m more likely to fact-check things that already feel suspicious to me going in, which is its own kind of bias. If a claim fits something I already believe, I’m less likely to question it. That’s something I want to actively work against. Being media literate isn’t just about spotting obvious misinformation, it’s about applying the same level of scrutiny to everything, including the stuff that already agrees with you.

Evaluating Misinformation Education Tools

When I first opened RumorGuard, I wasn’t sure what to expect, but I ended up spending way more time on it than I planned to. Scrolling through the debunked rumors felt a little like doom-scrolling, except actually productive. What got me was how many of the rumors looked completely believable at first glance. Some of them were things I had genuinely seen floating around before without ever questioning them, which was a little humbling.

Reading through the explanations from the News Literacy Project team made me realize that the problem isn’t always that people are gullible; it’s that misinformation is deliberately crafted to look credible. The experience left me way more aware of how quickly I usually scroll past something without stopping to think about where it came from or whether it’s actually true. If I’m being critical, the site works best if you already have some motivation to engage with it. It’s not going to grab someone who isn’t already curious. But for building the habit of pausing before you believe or share something, it genuinely works.

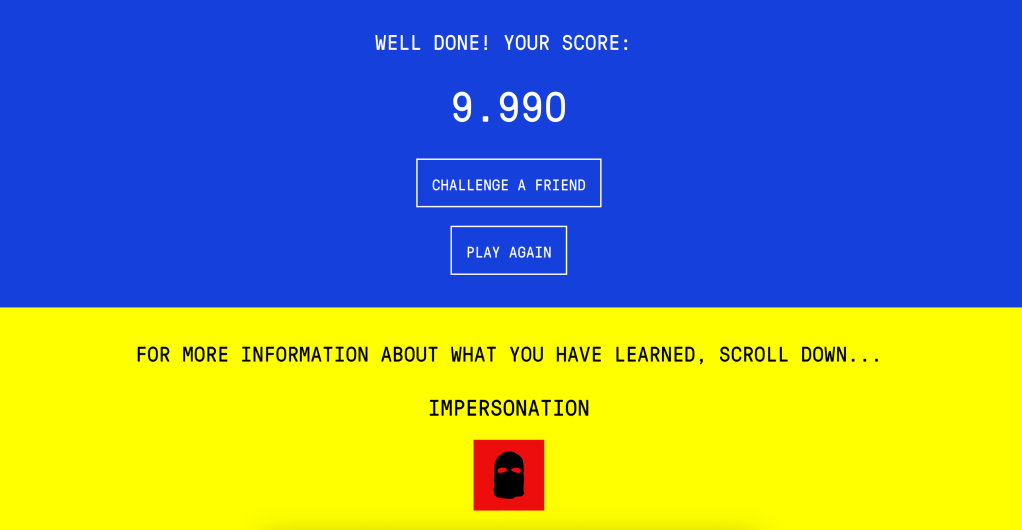

Playing Bad News was a strange experience in the best way. From the very first few clicks, I was already making choices I knew were manipulative: writing misleading headlines, picking the most emotionally charged wording, targeting people’s fears, and watching my follower count climb because of it. It felt weirdly satisfying in the moment, which is probably the most uncomfortable and important takeaway from the whole thing.

The further I got into the game, the more I started to recognize patterns in what actually “worked” to gain followers. Emotional content spread faster than factual content every single time. Impersonating a credible source made people trust me instantly without questioning it. Pushing polarizing narratives kept people engaged and angry, which turned out to be just as good as keeping them informed, maybe better. By the time I hit a score of 9,990 and had earned all six badges (Impersonation, Emotion, Polarization, Conspiracy, Discredit, and Trolling), I felt like I had genuinely learned something about how the internet works, just not in a way any textbook would teach it.

What made it hit differently than just reading about misinformation tactics is that I had to make active choices to use them. There’s something about deciding to manipulate people, even in a game, that makes you way more alert to when it’s happening to you in real life. A 2019 study in Royal Society Open Science supports this, finding that players came out significantly better at spotting manipulation after playing. The game is based on inoculation theory, the same logic behind vaccines, applied to media literacy.

After using both, I think the reason they work is that they make you an active participant instead of a passive reader. Research from Stanford has shown that most people are genuinely bad at evaluating online sources, so clearly just knowing misinformation exists doesn’t cut it. RumorGuard makes you practice skepticism in real time, and Bad News makes you feel what manipulation looks like from the inside. That combination, checking your instincts on one end and understanding the tactics on the other, is way more effective than any amount of lecturing on the subject could be.

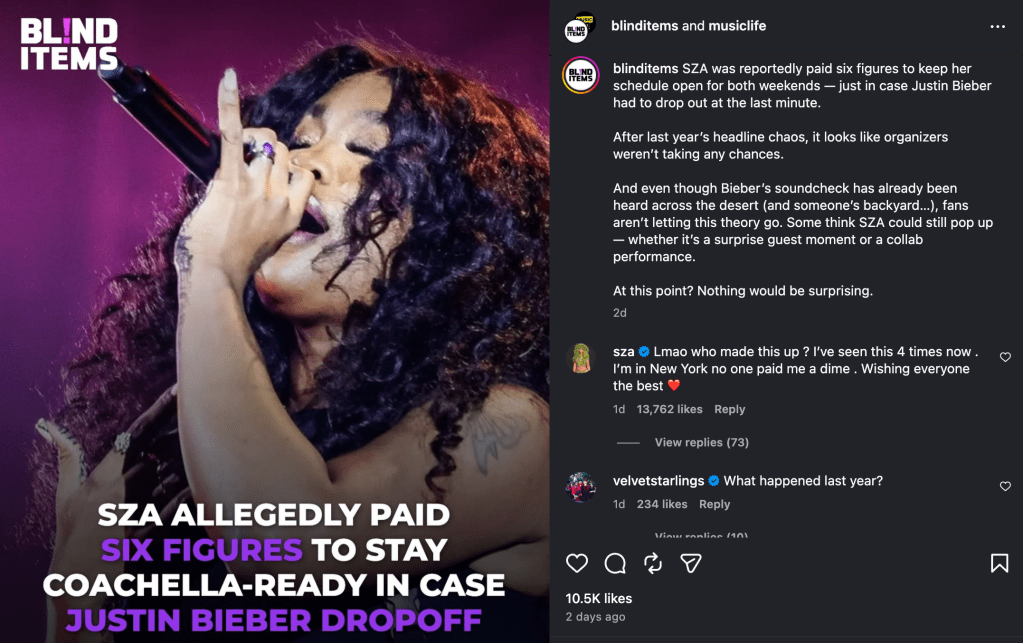

Did You Hear SZA Was Paid to Be Justin Bieber’s Coachella Backup? Let’s Break That Down.

This weekend I was scrolling through Instagram when I came across a post claiming that SZA was allegedly paid six figures to stay “Coachella-ready” just in case Justin Bieber backed out of his headlining performance. As someone who follows both artists, my first reaction was just confusion. It felt like one of those things that sounds just believable enough to spread, but also kind of off. That mix of “maybe?” and “wait, really?” is usually my sign to actually look into something before believing or sharing it.

Step 1: Look at Where the Claim is Coming From

The original post came from an Instagram account called Blind Items paired with a page called “Music Life.” Right away that raised a flag. “Blind Items” is literally built around unverified celebrity gossip, rumors posted without naming sources or providing proof. The headline also used the word “allegedly,” which signals that nobody actually confirmed anything. That word alone should always make you pause before sharing something.

Step 2: Check if Anyone Credible is Reporting It

I did a quick search to see if any well-known outlets were covering this story. When something is actually true, especially something as specific as a six-figure celebrity deal, you would expect sources like Billboard or Rolling Stone to be reporting it. I found nothing from any verified news source backing the claim up. If a story is only living on gossip Instagram pages and nowhere else, that is a strong sign it has not been confirmed by anyone with actual accountability.

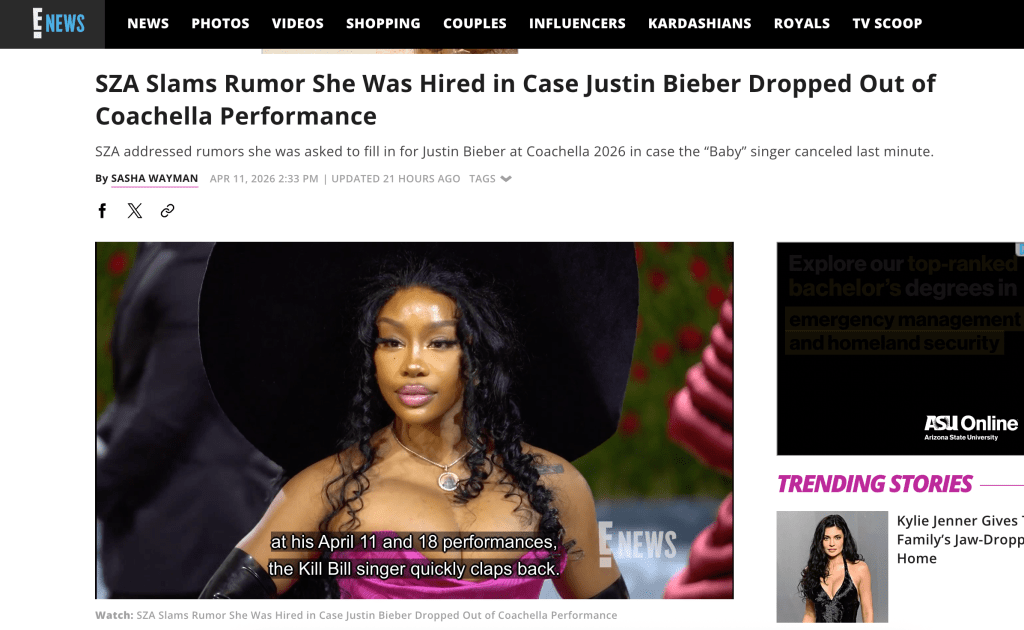

Step 3: Go to Primary Sources

This is the most important step and honestly the one that settled everything for me. Not only did SZA comment directly on the original Instagram post calling the rumor out, but she also personally stated she was in New York and had not been paid a single thing. That comment on the actual post where the rumor started is about as primary as a source gets. On top of that, E! News covered her direct response and quoted her full comment for anyone who missed it. When the actual person a rumor is about goes out of their way to deny it twice, in two different places, that is a pretty definitive conclusion.

Step 4: Think About Why It Spread

Even after knowing it was false, it is worth asking why this rumor gained traction. Coachella 2026 had a lot of buzz around Justin Bieber headlining after a long public absence due to health issues. People were already uncertain about whether he would follow through, which created the perfect environment for a rumor like this to feel believable. Misinformation spreads fastest when it taps into something people are already worried about or curious about.

Conclusion

After going through these steps, the claim was clearly unverified gossip that SZA herself shut down in multiple places. But beyond this specific rumor, the bigger takeaway is that pausing before you repost something is always worth it. Taking two minutes to check the source, search for other coverage, and look for a direct response can save you from spreading something completely false. If a headline uses words like “allegedly” and only appears on gossip pages, that is your sign to dig deeper before hitting share.

Fighting Fake News: How X and Discord Are (Trying to) Handle Misinformation

If you’ve spent any time online in the last few years, you’ve probably seen something that made you think, “wait, is that actually true?” Whether it’s a wild claim or a video that looks real but totally isn’t, misinformation is everywhere. Two platforms I use pretty regularly are X and Discord, and their approaches to handling this problem couldn’t be more different. One is very public and controversial, the other is more behind the scenes and honestly kind of easy to forget about. Let’s break down what each of them is actually doing, and what they should be doing better.

X:

After Elon Musk took over Twitter in 2022 (it was rebranded as X in 2023), one of the biggest changes was gutting the platform’s traditional content moderation teams. The company cut employees who worked on election integrity and countering misinformation, loosened rules around hate speech, and reinstated accounts of extremists who had previously been banned. On top of that, X scrapped a user-reporting feature that had allowed people to flag posts as “misleading.”

So what replaced all of that? Basically one thing: Community Notes, a crowdsourced fact-checking system where regular users add context or corrections under posts they think are misleading. Notes are applied through a bridging-based algorithm that requires agreement from users on different sides of the political spectrum, rather than just a majority vote.
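For anyone curious what “bridging” means in practice, here is a minimal Python sketch of the idea described above. It is a toy, not X’s actual algorithm: the group labels, the `note_is_shown` and `majority_vote` functions, and both thresholds are made up for illustration, and the real system infers viewpoints from rating history rather than using simple labels.

```python
from collections import defaultdict


def majority_vote(ratings):
    """Plain majority rule: show the note if more than half of raters found it helpful."""
    helpful = sum(1 for _, is_helpful in ratings if is_helpful)
    return helpful > len(ratings) / 2


def note_is_shown(ratings, min_per_group=2, min_helpful_share=0.6):
    """Toy bridging rule: every viewpoint group must independently find the note helpful.

    ratings is a list of (group_label, is_helpful) pairs. The labels and the
    thresholds are hypothetical values chosen for this example.
    """
    votes_by_group = defaultdict(list)
    for group, is_helpful in ratings:
        votes_by_group[group].append(is_helpful)

    # A note rated by only one side cannot count as bridging anything.
    if len(votes_by_group) < 2:
        return False

    for votes in votes_by_group.values():
        if len(votes) < min_per_group:
            return False
        if sum(votes) / len(votes) < min_helpful_share:
            return False
    return True


# Popular with one side only: wins a majority vote but fails the bridging rule.
one_sided = [("left", True)] * 8 + [("right", False)] * 3

# Fewer ratings overall, but both sides mostly agree the note is helpful.
cross_partisan = [("left", True)] * 3 + [("right", True)] * 3 + [("right", False)]

print(majority_vote(one_sided), note_is_shown(one_sided))
print(majority_vote(cross_partisan), note_is_shown(cross_partisan))
```

The point of the comparison is that a note can be popular without being shown. Under a rule like this, agreement across groups matters more than the raw number of helpful votes.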

In theory, this is actually a cool idea. And there’s real research suggesting it can work: according to a University of Rochester study, posts with public correction notes were 32% more likely to be deleted by their own authors than those that only received private notes. That means the system can pressure people into correcting themselves.

But the notes often don’t show up fast enough. “Content spreads rapidly across X, and if a note comes too late, few users will get a chance to see it. Notes that take 48 hours or so to go up have almost no effect.” During high-stakes moments, the system falls apart entirely. After the attempted assassination of Donald Trump in 2024, only five Community Notes were published to counter the 100 most popular conspiratorial posts about the shooting. Around the 2024 election, 74% of misinformation in a sample of posts failed to receive any notes at all.

From personal experience, Community Notes, when they appear, are actually pretty informative and usually link to credible sources. But I’ve also watched obviously false things go viral with zero notes attached, especially in those first viral hours. The system just can’t keep up.

Discord:

Discord is a totally different beast. While X is public, Discord is built around private or semi-private servers, basically invite-only group chats. This makes misinformation harder to see from the outside, but no harder to find once you’re in.

Discord does have an official policy. The platform prohibits communities that share false or misleading health information likely to cause harm, including anti-vaccination claims and dangerous unsupported treatments, and relies on credible sources like the WHO and the CDC. There’s also a civic policy: Discord states it will remove harmful misinformation about civic processes when it becomes aware of it.

Sounds good, except enforcement is the problem. Discord’s content moderation strategy for private groups appears to be reactive rather than proactive, depending primarily on reports from users inside those groups rather than automatic filtering. If everyone in a server believes the misinformation, nobody reports it.

As a regular Discord user, I’ve seen this play out. Some servers have active mods and clear rules. Others are basically the wild west. The platform offloads almost all responsibility onto individual server owners, which works great in some servers and completely fails in others.

Potential Change

For X, the biggest fix needed is speed. Community Notes is a solid concept but too slow for viral misinformation. X should use AI to flag potentially false posts faster and prioritize them for contributor review. Research found that rapid algorithmic fact-checking, though imperfect, was more effective than slower professional fact-checking, suggesting a hybrid AI-plus-human approach could be powerful.

For Discord, the reactive-only model isn’t enough. The platform should invest in proactive scanning tools that can detect misinformation patterns before users have to report anything. Requiring larger servers to maintain baseline moderation standards would also help close the gap.

Both X and Discord have made some effort, but neither has truly solved the problem. X is betting on a crowdsourced system that’s too slow. Discord is hoping users police themselves, which is asking way too much. Misinformation thrives in exactly these kinds of gaps, and closing them should be a priority, not an afterthought.

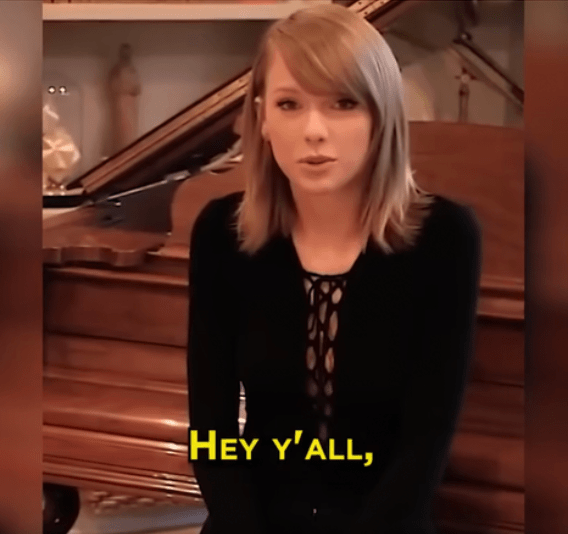

Your FYP is Lying to You

Okay, I’ll just say it, I’ve been fooled by a deepfake. More than once, probably. A while back a video showed up on my feed of Taylor Swift promoting a Le Creuset cookware giveaway. “Hey y’all, it’s Taylor Swift here,” she says in the clip. “Due to a packaging error, we can’t sell 3,000 Le Creuset cookware sets. So I’m giving them away to my loyal fans for free.” It sounded a little off, her voice had this slightly robotic cadence to it, but I didn’t think twice. I kept scrolling. Later I found out it was a completely AI-generated deepfake designed to trick people into clicking a link and handing over personal information. Le Creuset had nothing to do with it. And I just sat with that for a second, because even knowing something felt a little off, I hadn’t actually stopped to question it.

I’m writing this because I think that experience is really common right now, especially for people my age. We’re on our phones constantly, which means we’re exposed to a massive volume of video content every single day. We’ve gotten really good at processing it fast. But “processing fast” and “actually evaluating what’s real” are two very different things, and deepfake technology is specifically designed to exploit that gap.

So here’s what I actually want you to walk away with: not anxiety, not paranoia, just a few concrete things to look for and a better mental model of what’s happening when you scroll.

What even is a deepfake?

The word gets thrown around a lot, but I want to make sure we’re starting from the same place. A deepfake is a video or audio clip that has been manipulated, or in some cases entirely generated, using artificial intelligence, often a type called a generative adversarial network, or GAN. Basically, two AI systems run against each other: one tries to create fake content, and the other tries to detect whether it’s fake. They keep improving each other until the fake is convincing enough to pass. The result can be a video of a real person saying something they never said, or doing something they never did.

The term “deepfake” comes from combining “deep learning” (a type of AI) and “fake.” It started getting mainstream attention around 2017, but the tools have gotten dramatically more accessible since then. You don’t need to be a programmer or have expensive hardware anymore. A deepfake video can now be created for about $5 in under ten minutes. That’s a pretty significant shift in what the average person is capable of creating, and in what your average viewer is up against.

According to a 2023 McAfee survey, 70% of people said they weren’t confident they could tell the difference between a real video and a deepfake. And that data is a couple years old now. The technology has only gotten better since then.

Why should you care?

The obvious harm is misinformation: fake videos of politicians saying alarming things, fabricated clips that spread before anyone can debunk them. But it goes further than that. Deepfakes are being used to impersonate celebrities in financial scams, like the Taylor Swift example above, or fake investment videos featuring Elon Musk, Prince William, and the UK Prime Minister. They’ve been used to create non-consensual intimate images of real people. They’ve been used to harass and embarrass private individuals. And they show up in our feeds mixed in with genuine content, often from accounts that look totally normal.

The part that really gets me is how deepfakes work with the way our social media feeds are designed. Algorithms reward things that get engagement fast: likes, shares, comments, emotional reactions. A shocking, convincing fake video can rack up thousands of shares before anyone bothers to check whether it’s real. In one documented case, a deepfake ad campaign featuring a Financial Times journalist reached nearly a million users across the EU in just six weeks. By the time a correction or debunk is posted, most of the people who saw the original have already moved on. That’s not a coincidence. It’s a feature of the system, even if it wasn’t intentionally designed to spread lies.

Okay but how do you actually spot one?

Here are the real visual cues that researchers and journalists use, things you can actually look for in the moment, not after you’ve already shared something.

Watch the edges of the face. Hair and the outline where the face meets the background are notoriously hard to fake. Look for blurring, weird flickering, or a halo effect at the hairline.

Look at the eyes. Blinking is often unnatural in deepfakes: too infrequent, too fast, or slightly off-rhythm. Also look for lighting inconsistencies. Do the eyes reflect light the same way the rest of the face does?

Check the skin texture. AI-generated faces often look a little too smooth, especially in motion. Real skin has texture and pores that are difficult to replicate consistently across frames.

Pay attention to teeth and the inside of the mouth. This is one of the hardest things for AI to get right. Look for blurring, weird shapes, or teeth that don’t quite look like teeth.

Notice if the audio matches the mouth movement. Even a slight delay or disconnect between speech and lip movement is a major red flag, and looking back, that robotic cadence in the Taylor Swift clip was exactly this. Researchers found that humans can only detect AI-generated speech about 73% of the time, which sounds okay until you realize that means roughly one in four deepfake audio clips slips right past us.

Consider the source. Where did this video come from originally? Who posted it? Is there any identifiable context? A surprising or emotionally charged claim with no clear sourcing is worth slowing down on. A free giveaway from a major celebrity with zero official announcement anywhere? That’s a red flag hiding in plain sight.

None of these are foolproof, and newer deepfakes are getting better at all of these things. But the point isn’t to become a forensic video expert. It’s to develop the habit of pausing before you react or share, especially with content that’s designed to make you feel something strongly.
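To put the 73% detection figure mentioned above in perspective, here is a quick back-of-the-envelope calculation in Python. It is only a sketch: the function names are made up for this example, and it assumes every clip is an independent coin flip at the same detection rate, which real scrolling almost certainly is not.

```python
def miss_rate(detect_rate):
    """Share of fake clips that slip past a listener with this detection rate."""
    return 1 - detect_rate


def chance_at_least_one_missed(detect_rate, n_clips):
    """Chance that at least one of n_clips fakes goes unnoticed.

    Assumes each clip is judged independently (a simplifying assumption).
    """
    return 1 - detect_rate ** n_clips


DETECT_RATE = 0.73  # figure quoted in the post

print(f"Missed per clip: {miss_rate(DETECT_RATE):.0%}")
for n in (1, 2, 4):
    chance = chance_at_least_one_missed(DETECT_RATE, n)
    print(f"{n} fake clip(s): {chance:.0%} chance at least one gets through")
```

Under that assumption, the odds of being fooled at least once climb quickly as the number of fake clips goes up, which is why the habit of pausing matters more than any single visual cue.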

What to do if something feels off

If a video is making a specific claim about a real person, go look for it somewhere else. Not just a different social media account, an actual news outlet or primary source. If something shocking genuinely happened, it’ll be covered. If you can’t find any corroborating reporting, that’s information. Had I taken ten seconds to search “Taylor Swift Le Creuset giveaway,” I would have found nothing, because it never happened.

You can also do a reverse image search on stills from a video using Google Lens, which sometimes surfaces the original context or earlier versions of the footage. It’s not a magic solution but it takes about fifteen seconds and has saved me from sharing something embarrassing more than once.

And honestly? It’s okay to just not share something you’re not sure about. That’s not cowardice. It’s just being a thoughtful person in a world where sharing something false has actual consequences for real people.

The bigger picture

I don’t want to end this on a doom-and-gloom note, because I actually think there’s something kind of empowering about understanding how this works. A lot of misinformation thrives on the fact that people feel overwhelmed and don’t know where to start. Once you understand that deepfakes work by exploiting how fast we scroll and how much we trust visual information, you have something real to work with. You’re not just a passive viewer anymore.

The technology is going to keep getting more convincing. The average American now encounters about 2.6 deepfake videos per day, with people ages 18–24 seeing even more, roughly 3.5 daily. But our ability to think critically, slow down, and ask basic questions doesn’t get outdated. That’s still the best tool we have.

So the next time your FYP serves you something wild, take an extra two seconds. Check the edges. Watch the eyes. Look for where it came from. It’s not that deep, it’s just paying attention.